Every day, millions of us log on to social media and video‑sharing platforms believing we’re stepping into a global conversation. We scroll, we comment, we argue, we hit the “like” button. We assume we are always engaging with other real people, but increasingly, we’re not.

In the past decade, social media has become the world’s public square: the arena where political debates unfold, cultural trends emerge, and news spreads at lightning speed. As these platforms have grown, so has a hidden force shaping what people see, think, and feel. A silent swarm of automated accounts, known simply as “bots”, is taking over the posts we interact with. These digital actors now play a central role in influencing online discourse, often without users realizing it.

What Exactly Are Bots?

Automated accounts, which were once clumsy, obvious, and easy to ignore, have evolved into sophisticated digital actors capable of shaping public opinion at a scale no human influencer could dream of. They’re doing it quietly, relentlessly, and with astonishing effectiveness. They can post content, like and share posts, follow accounts, comment on videos, and even engage in conversations. While some bots are harmless and serve useful purposes, such as customer service chatbots or news aggregators, others are engineered specifically to manipulate public opinion.

According to reporting on recent investigations, bots have been used to flood platforms with coordinated messages, amplify certain narratives, and distort the perceived popularity of ideas or movements. Research also shows that these bots can blend seamlessly into social networks, making them difficult for everyday users to detect. That, in turn, makes it harder for users to recognize the psychological impact bots can have on how they form their own opinions.

How Bots Are Used on Hip-Hop/Pop Media Platforms to Shape Public Opinion

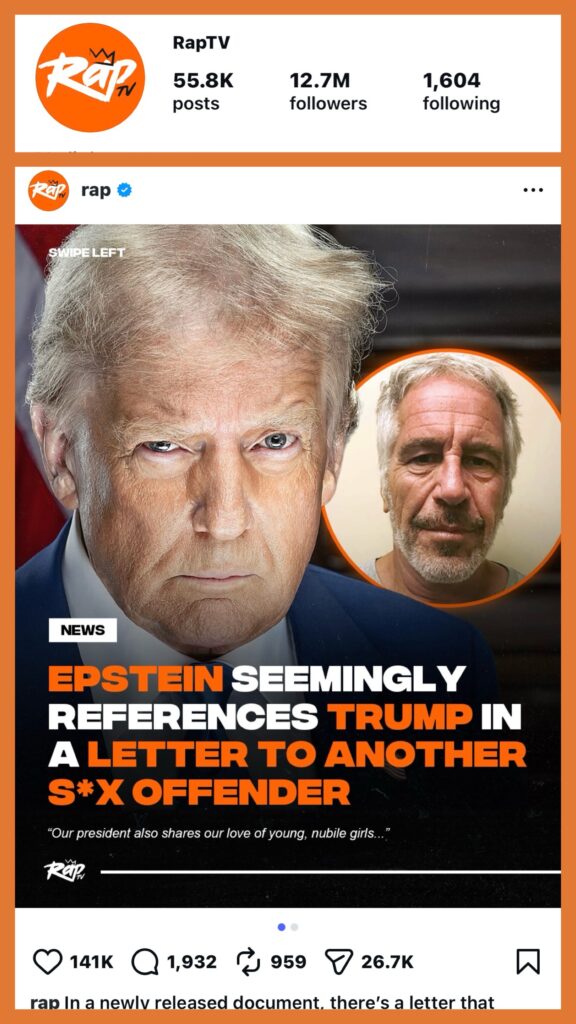

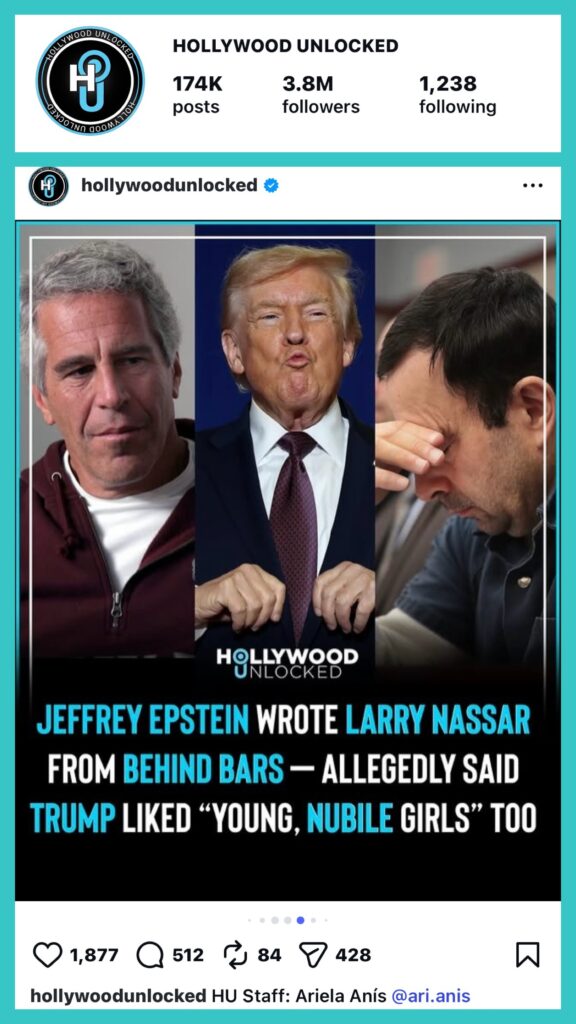

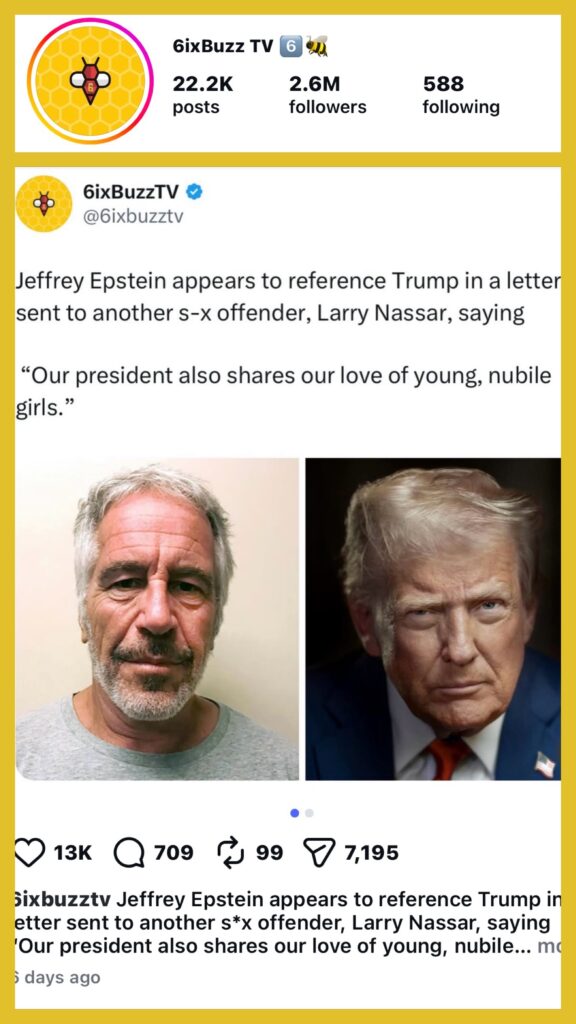

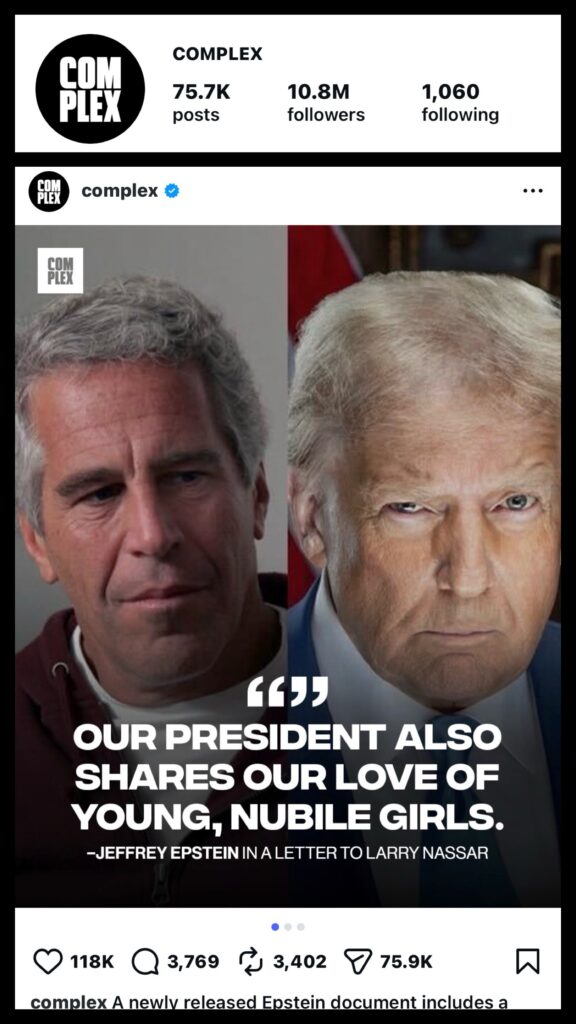

Bot farm operations are often funded by criminal organizations, foreign governments, and various interest groups. They target media platforms with large follower counts that post content designed to attract younger and often politically unsophisticated users. This type of information warfare is highly coordinated and designed to spread false or misleading information rapidly and to make fringe narratives appear more popular or mainstream.

Platform owners will accept considerable sums of money from these rogue networks to weaponize their posts against their own followers and subscribers. How often have you scrolled through your feed only to be inundated with posts carrying similar clickbait captions from multiple pop culture accounts, all seemingly posted at around the same time? Once the posts are up, in comes the swarm of bot accounts with their programmed, curated comments.

A New Kind of Propaganda Machine

The Illusion of Consensus

Humans are social creatures. We take cues from the crowd. If a video racks up thousands of comments pushing a particular narrative, or if a post suddenly trends out of nowhere, we instinctively assume there’s genuine momentum behind it. Bots exploit this instinct.

They manufacture the appearance of consensus by flooding platforms with coordinated messages, a tactic known as astroturfing. They can make fringe ideas look mainstream, amplify misinformation, or drown out legitimate voices. On video‑sharing platforms, bots can help inflate views and engagement, tricking algorithms into promoting content that never earned its popularity. When the crowd is fake, our perception of reality becomes malleable and easier to distort.

Traditional propaganda required armies of people. Today, a single operator with a bot network can influence political debates, cultural conflicts, or public health discussions across multiple platforms simultaneously. Bots don’t sleep. They don’t get tired. They don’t question orders. Bots can be weaponized to overwhelm or intimidate individuals. Coordinated bot swarms may target journalists, activists, or political candidates with harassment, drowning out their voices and discouraging participation in public discourse.

Bots can also be used to drive crowdsourcing operations that help criminal organizations locate an intended target for assassination, or to help organize online mob actions against law enforcement personnel. Bots don’t need to convince you of anything directly. They just need to make you doubt. Doubt what’s real. Doubt what’s popular. Doubt whether your voice matters in the noise. When public discourse becomes polluted with artificial amplification, democratic debate becomes harder to trust. When trust erodes, it becomes easier to divide and conquer.

Let’s Recap the Five Objectives of Bot Operations

The silent bot swarm isn’t just an annoyance. It’s a structural threat to how societies form opinions, make decisions, and understand themselves.

Here are the five main objectives of bot‑driven psychological operations on social media.

1. Amplifying Misinformation

Studies highlight how bots inject misinformation into online discussions and help it travel faster and farther than organic content. On video‑sharing platforms, this can involve mass‑commenting on videos, boosting misleading clips, or manipulating recommendation algorithms.

2. Astroturfing Movements

Astroturfing refers to creating the illusion of widespread grassroots support. Bots can generate thousands of posts supporting a political cause, attacking a public figure, or promoting a conspiracy theory. In some cases, bot networks have been deployed around major political events or protests to sway public sentiment or sow confusion.

3. Shaping Trending Topics

Trending lists on platforms like X (formerly Twitter), TikTok, Instagram or YouTube are highly influential. Bots can artificially inflate engagement metrics such as likes, shares, views and comments. This tactic can essentially push certain topics into trending sections. Once a topic trends, real human users often join the conversation, giving the manufactured narrative genuine momentum.

4. Harassment and Silencing

Bots can be weaponized to intimidate a person. Coordinated bot swarms may target someone with harassment or violent threats. Criminal organizations may use bots to attack a target on social media, reasoning that doing so lets the organization remain anonymous and evade law enforcement action.

5. Algorithm Manipulation

Recommendation systems rely heavily on engagement signals. Bots can distort these signals by mass‑engaging with specific content, tricking algorithms into promoting videos or posts that appear popular. This tactic is especially potent on video platforms, where watch‑time and engagement drive visibility.
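To make the mechanics concrete, here is a minimal sketch of how fake engagement can flip a ranking. Everything in it is an assumption for illustration: the `Post` fields, the `WEIGHTS` values, and the `bot_boost` helper are hypothetical, and no real platform's recommendation system is this simple.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    likes: int = 0
    comments: int = 0
    shares: int = 0


# Hypothetical weights: real platforms do not publish their formulas.
WEIGHTS = {"likes": 1.0, "comments": 2.0, "shares": 3.0}


def engagement_score(post: Post) -> float:
    """Toy score: a weighted sum of raw engagement counts."""
    return (
        WEIGHTS["likes"] * post.likes
        + WEIGHTS["comments"] * post.comments
        + WEIGHTS["shares"] * post.shares
    )


def rank(posts: list[Post]) -> list[Post]:
    """Order posts from highest to lowest toy engagement score."""
    return sorted(posts, key=engagement_score, reverse=True)


def bot_boost(post: Post, bots: int) -> Post:
    """Simulate each bot account adding one like and one comment."""
    return Post(post.title, post.likes + bots, post.comments + bots, post.shares)


organic = Post("organic", likes=900, comments=120, shares=40)
fringe = Post("fringe", likes=50, comments=5, shares=1)

before = [p.title for p in rank([organic, fringe])]
after = [p.title for p in rank([organic, bot_boost(fringe, bots=500)])]

print(before)  # ['organic', 'fringe']
print(after)   # ['fringe', 'organic']
```

In this toy model, 500 bot accounts are enough to push the fringe post above content that earned its numbers from real people. A score built only on raw counts has no way to tell the difference.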

Combating Bot Manipulation Is Like Playing a Game of Whack‑A‑Mole

Platforms and researchers are working to detect and dismantle bot networks, but the challenge is immense. Bots are becoming more sophisticated, often using AI to generate human‑like language and behavior. Meanwhile, the incentives for deploying them remain strong: political influence, financial gain, and ideological warfare.
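One simple detection idea can be sketched in a few lines: look for many different accounts posting nearly identical comments within a short window. This is only an illustrative heuristic, not how any particular platform works. The function name, the 60‑second window, and the three‑account threshold are all assumptions, and AI‑generated comments that vary their wording would slip right past it.

```python
import re
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]", "", text.lower())
    return " ".join(cleaned.split())


def flag_coordinated(comments, window_seconds=60, min_accounts=3):
    """Return {normalized_text: [accounts]} for suspicious bursts.

    `comments` is a list of (account, timestamp_in_seconds, text) tuples.
    A burst is flagged when at least `min_accounts` distinct accounts post
    the same normalized text within `window_seconds` of each other.
    """
    groups = defaultdict(list)
    for account, ts, text in comments:
        groups[normalize(text)].append((ts, account))

    flagged = {}
    for text, items in groups.items():
        items.sort()
        start = 0
        for end in range(len(items)):
            # Shrink the window until it spans at most window_seconds.
            while items[end][0] - items[start][0] > window_seconds:
                start += 1
            accounts = {acct for _, acct in items[start:end + 1]}
            if len(accounts) >= min_accounts and len(accounts) > len(flagged.get(text, ())):
                flagged[text] = sorted(accounts)
    return flagged


sample = [
    ("acct_a", 0, "This is the REAL story!!"),
    ("acct_b", 12, "this is the real story"),
    ("acct_c", 30, "This is the real story."),
    ("fan_1", 45, "Great video, thanks"),
    ("acct_d", 4000, "This is the real story"),
]

print(flag_coordinated(sample))
# {'this is the real story': ['acct_a', 'acct_b', 'acct_c']}
```

A check like this can also flag real fans repeating a slogan, which is part of why detection is so hard: tighten the rules and bots adapt, loosen them and genuine users get caught.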

Social media platforms often take down bot networks, tweak algorithms, and publish transparency reports. Yet the uncomfortable truth remains: bots evolve faster than platforms can respond. As long as social media remains central to public life, bots will remain a powerful and contested force.
